ML and NLP Research Highlights of 2021

This post summarizes progress across multiple impactful areas in ML and NLP in 2021.

Credit for the title image: Liu et al. (2021)

2021 saw many exciting advances in machine learning (ML) and natural language processing (NLP). In this post, I will cover the papers and research areas that I found most inspiring. I tried to cover the papers that I was aware of but likely missed many relevant ones. Feel free to highlight them as well as ones that you found inspiring in the comments. I discuss the following highlights:

- Universal Models

- Massive Multi-task Learning

- Beyond the Transformer

- Prompting

- Efficient Methods

- Benchmarking

- Conditional Image Generation

- ML for Science

- Program Synthesis

- Bias

- Retrieval Augmentation

- Token-free Models

- Temporal Adaptation

- The Importance of Data

- Meta-learning

1) Universal Models

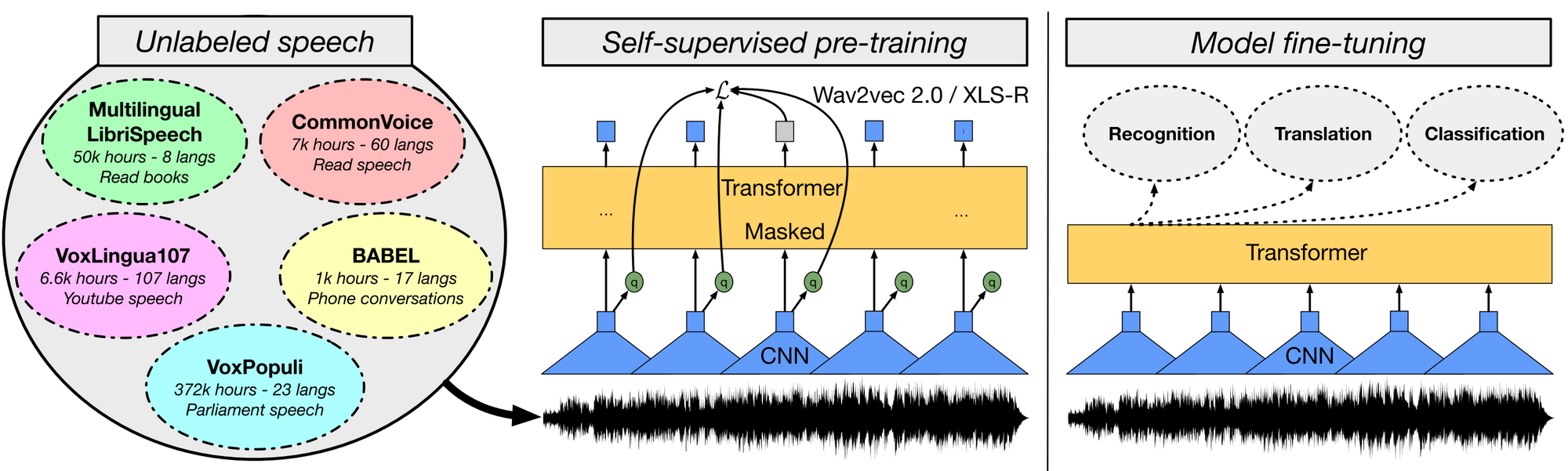

What happened? 2021 saw the continuation of the development of ever larger pre-trained models. Pre-trained models were applied in many different domains and started to be considered critical for ML research [1]. In computer vision, supervised pre-trained models such as Vision Transformer [2] have been scaled up [3] and self-supervised pre-trained models have started to match their performance [4]. The latter have been scaled beyond the controlled environment of ImageNet to random collections of images [5]. In speech, new models have been built based on wav2vec 2.0 [6] such as W2v-BERT [7] as well as more powerful multilingual models such as XLS-R [8]. At the same time, we saw new unified pre-trained models for previously under-researched modality pairs such as for videos and language [9] as well as speech and language [10]. In vision and language, controlled studies shed new light on important components of such multi-modal models [11][12]. By framing different tasks in the paradigm of language modelling, models have had great success also in other domains such as reinforcement learning [13] and protein structure prediction [14]. Given the observed scaling behaviour of many of these models, it has become common to report performance at different parameter sizes. However, increases in pre-training performance do not necessarily translate to downstream settings [15][16].

Why is it important? Pre-trained models have been shown to generalize well to new tasks in a given domain or modality. They demonstrate strong few-shot learning behaviour and robust learning capabilities. As such, they are a valuable building block for research advances and enable new practical applications.

What’s next? We will undoubtedly see more and even larger pre-trained models developed in the future. At the same time, we should expect individual models to perform more tasks at the same time. This is already the case in language where models can perform many tasks by framing them in a common text-to-text format. Similarly, we will likely see image and speech models that can perform many common tasks in a single model. Finally, we will see more work that trains models for multiple modalities.

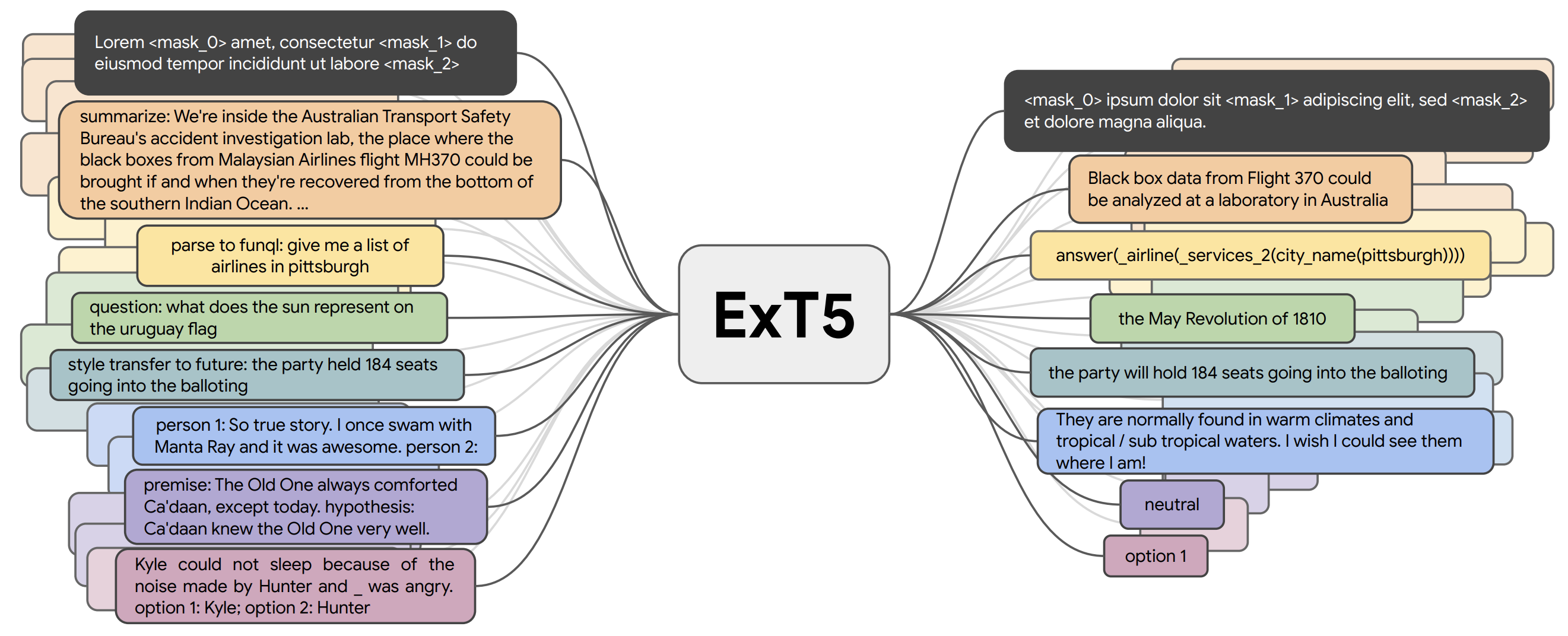

2) Massive Multi-task Learning

What happened? Most pre-trained models in the previous section are self-supervised. They generally learn from large amounts of unlabelled data via an objective that does not require explicit supervision. However, for many domains large amounts of labelled data are already available, which can be used to learn better representations. So far, multi-task models such as T0 [17], FLAN [18], and ExT5 [19] have been pre-trained on around 100 tasks mainly for language. Such massive multi-task learning is closely related to meta-learning. Given access to a diverse task distribution [20], models can learn to learn different types of behaviour such as how to do in-context learning [21].

Why is it important? Massive multi-task learning is possible due to the fact that many recent models such as T5 and GPT-3 use a text-to-text format. Models thus no longer require hand-engineered task-specific loss functions or task-specific layers in order to effectively learn across multiple tasks. Such recent approaches highlight the benefit of combining self-supervised pre-training with supervised multi-task learning and demonstrate that a combination of both leads to models that are more general.
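To make the text-to-text idea concrete, here is a minimal sketch of how examples from different tasks can be cast into one shared format. The task names and prompt prefixes are hypothetical and chosen only for illustration; they are not the templates used by T5, T0, FLAN, or ExT5.

```python
# Hypothetical task names and prompt templates, for illustration only.
def to_text_to_text(task, example):
    """Cast a labelled example from any task into an (input text, target text) pair."""
    if task == "sentiment":
        return f"sentiment: {example['text']}", example["label"]
    if task == "translation":
        return f"translate English to German: {example['source']}", example["target"]
    if task == "nli":
        source = f"nli premise: {example['premise']} hypothesis: {example['hypothesis']}"
        return source, example["label"]
    raise ValueError(f"unknown task: {task}")


mixture = [
    ("sentiment", {"text": "A delightful film.", "label": "positive"}),
    ("translation", {"source": "Thank you.", "target": "Danke."}),
    ("nli", {"premise": "A dog runs.", "hypothesis": "An animal moves.", "label": "entailment"}),
]

# Every task now shares one interface (string in, string out), so a single
# sequence-to-sequence model could be trained on the whole mixture with one loss.
pairs = [to_text_to_text(task, example) for task, example in mixture]
for source, target in pairs:
    print(f"{source!r} -> {target!r}")
```

Because labels are emitted as plain strings, adding a new task only requires a new template rather than a new output layer or loss function.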

What’s next? Given the availability and open-source nature of datasets in a unified format, we can imagine a virtuous cycle where newly created high-quality datasets are used to train more powerful models on increasingly diverse task collections, which could then be used in-the-loop to create more challenging datasets.

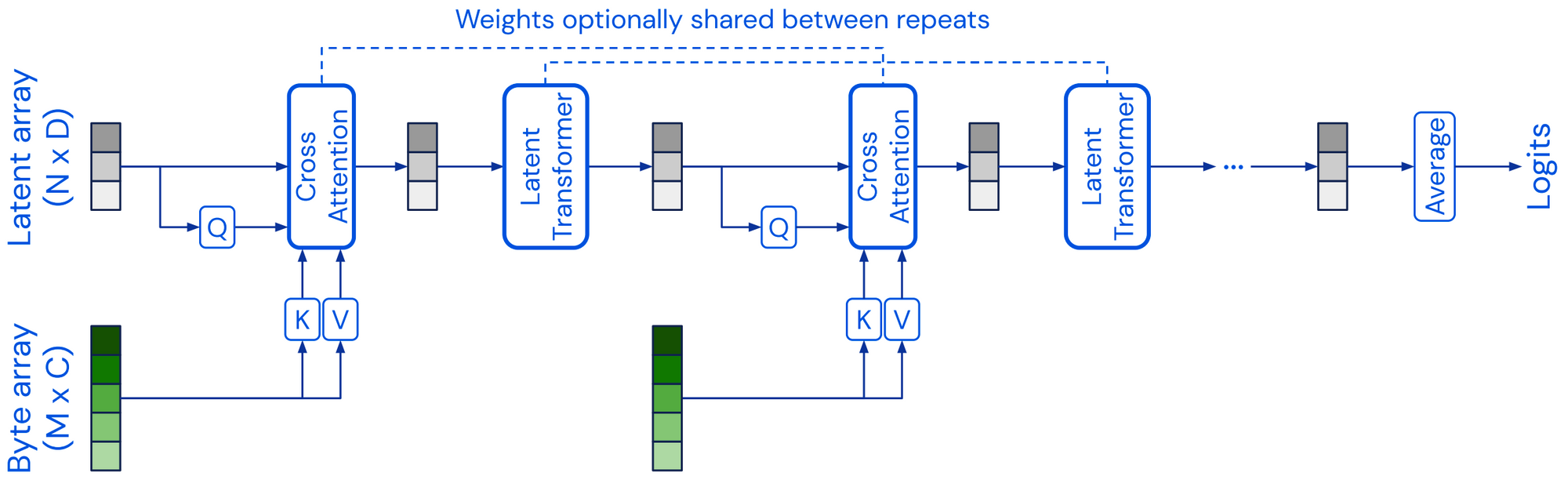

3) Beyond the Transformer

What happened? Most pre-trained models discussed in the previous sections build on the transformer architecture [22]. 2021 saw the development of model architectures that are viable alternatives to the transformer. The Perceiver [23] is a transformer-like architecture that scales to very high-dimensional inputs by using a latent array of a fixed dimensionality as its base representation and conditioning this on the input via cross-attention. Perceiver IO [24] extended the architecture to also deal with structured output spaces. Other models have tried to replace the ubiquitous self-attention layer, most notably using multilayer perceptrons (MLPs) such as in the MLP-Mixer [25] and gMLP [26]. Alternatively, FNet [27] uses 1D Fourier Transforms instead of self-attention to mix information at the token level. In general, it is useful to think of an architecture as decoupled from the pre-training strategy. If CNNs are pre-trained the same way as transformer models, they achieve competitive performance on many NLP tasks [28]. Similarly, using alternative pre-training objectives such as ELECTRA-style pre-training [29] may lead to gains [30].
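To illustrate how Fourier transforms can stand in for self-attention, the sketch below mixes a small sequence of token vectors without any learned parameters. It assumes, as a simplification of my understanding of FNet, that a 1D discrete Fourier transform is applied along the hidden dimension and then along the sequence dimension, and that only the real part is kept. A naive DFT is used instead of a fast Fourier transform to keep the example dependency-free.

```python
import cmath


def dft(values):
    """Naive 1D discrete Fourier transform of a sequence of numbers."""
    n = len(values)
    return [
        sum(v * cmath.exp(-2j * cmath.pi * k * t / n) for t, v in enumerate(values))
        for k in range(n)
    ]


def fourier_mix(x):
    """Mix a list of token vectors (seq_len x hidden) with two 1D DFTs.

    Assumed simplification: transform along the hidden dimension, then along
    the sequence dimension, and keep the real part. No parameters are learned.
    """
    over_hidden = [dft(row) for row in x]       # transform each token vector
    columns = list(zip(*over_hidden))           # hidden x seq_len
    over_seq = [dft(col) for col in columns]    # transform across tokens
    return [[over_seq[h][s].real for h in range(len(columns))] for s in range(len(x))]


tokens = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # 3 tokens, hidden size 2
mixed = fourier_mix(tokens)
print(mixed)
```

Every output position depends on every input token, which is the mixing role that self-attention normally plays, but at the cost of a fixed rather than input-dependent mixing pattern.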

Why is it important? Research progresses by exploring many complementary or orthogonal directions at the same time. If most research focuses on a single architecture, this will inevitably lead to bias, blind spots, and missed opportunities. New models may address some of the transformers' limitations such as the computational complexity of attention, its black-box nature, and order-agnosticity. For instance, neural extensions of generalized additive models offer much better interpretability compared to current models [31].

What’s next? While pre-trained transformers will likely continue to be deployed as standard baselines for many tasks, we should expect to see alternative architectures particularly in settings where current models fall short, such as modeling long-range dependencies and high-dimensional inputs or where interpretability and explainability are required.

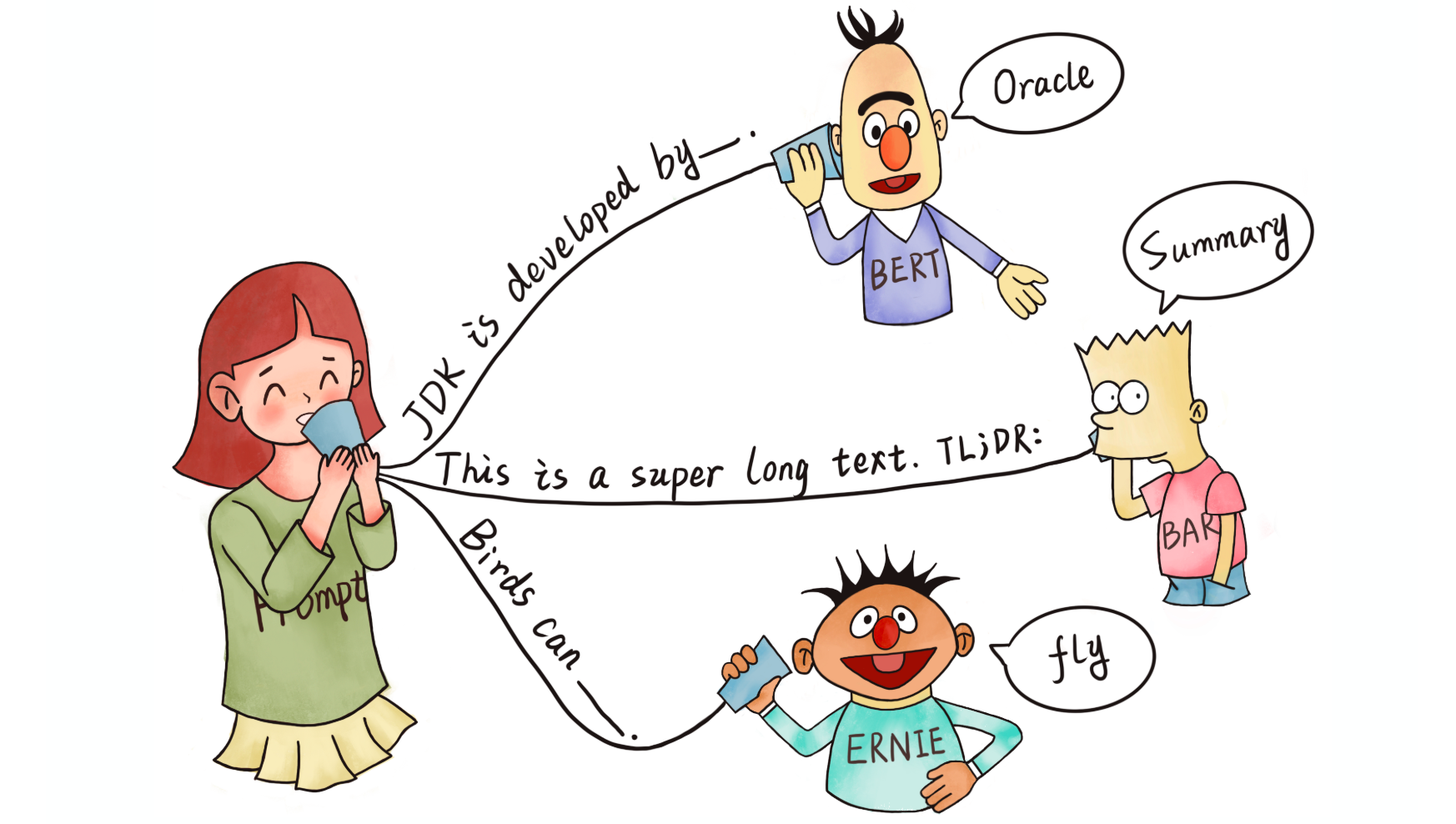

4) Prompting

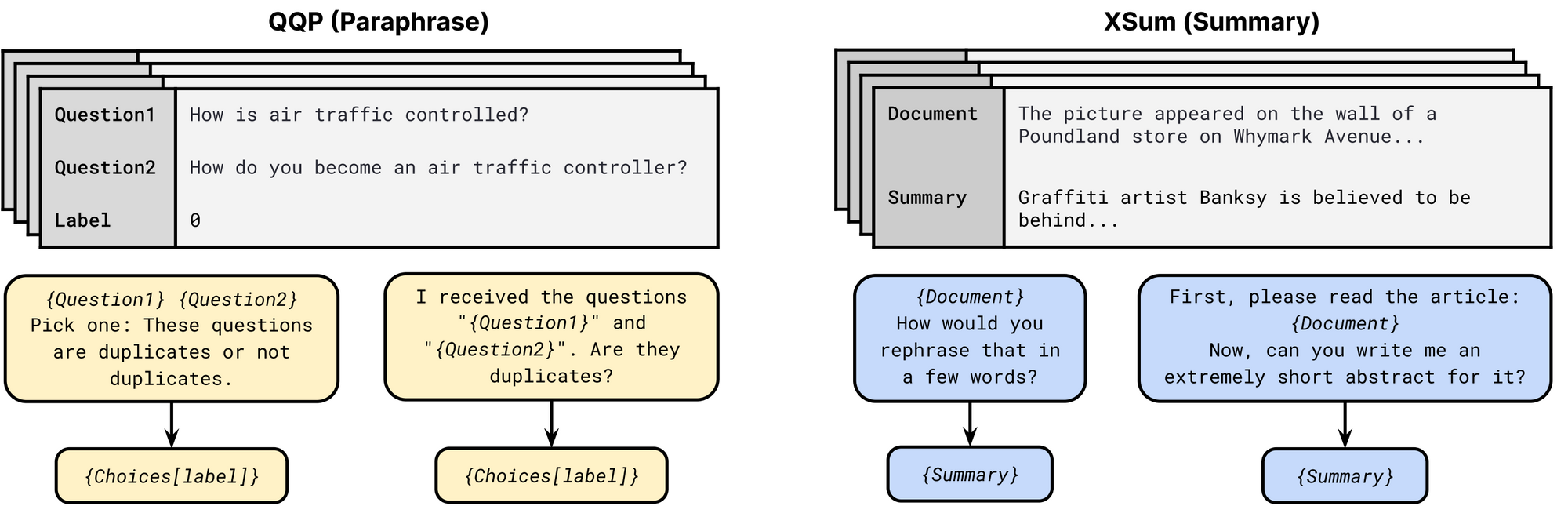

What happened? Popularized by GPT-3 [32], prompting has emerged as a viable alternative input format for NLP models. Prompts typically include a pattern that asks the model to make a certain prediction and a verbalizer that converts the prediction to a class label. Several approaches such as PET [33], iPET [34], and AdaPET [35] leverage prompts for few-shot learning. Prompts are not a silver bullet, however. Models' performance varies drastically depending on the prompt and finding the best prompt still requires labeled examples [36]. In order to compare models reliably in a few-shot setting, new evaluation procedures have been developed [37]. A large number of prompts are available as part of the public pool of prompts (P3), enabling exploration of the best way to use prompts. This survey [38] provides an excellent overview of the general research area.
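The pattern and verbalizer described above can be sketched as follows. The pattern wording, the label words, and the `toy_score_word` function are all hypothetical; in practice the scorer would be a masked language model that returns the probability of a word at the `[MASK]` position.

```python
def pattern(text):
    """Wrap the input in a cloze question with a single masked slot."""
    return f"{text} All in all, it was [MASK]."


# Verbalizer: maps each class label to a word the model can predict.
VERBALIZER = {"positive": "great", "negative": "terrible"}


def classify(text, score_word):
    """Pick the label whose verbalized word scores highest at the [MASK] slot.

    score_word(prompt, word) -> float is a stand-in for a masked language model.
    """
    prompt = pattern(text)
    scores = {label: score_word(prompt, word) for label, word in VERBALIZER.items()}
    return max(scores, key=scores.get)


def toy_score_word(prompt, word):
    # Toy scorer, NOT a language model: it prefers "great" only when the
    # prompt contains the word "loved", and "terrible" otherwise.
    liked = "loved" in prompt
    return 1.0 if (word == "great") == liked else 0.0


print(classify("I loved every minute.", toy_score_word))
print(classify("Dull and far too long.", toy_score_word))
```

Changing the pattern or the label words changes the scores the model assigns, which is one way to see why performance can vary so much from prompt to prompt.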

Why is it important? A prompt can be used to encode task-specific information, which can be worth up to 3,500 labeled examples, depending on the task [39]. Prompts thus enable a new way to incorporate expert information into model training, beyond manually labeling examples or defining labeling functions [40].

What’s next? We have only scratched the surface of using prompts to improve model learning. Prompts will become more elaborate, for instance including longer instructions [18:1] as well as positive and negative examples [41] and general heuristics. Prompts may also be a more natural way to incorporate natural language explanations [42] into model training.

5) Efficient Methods

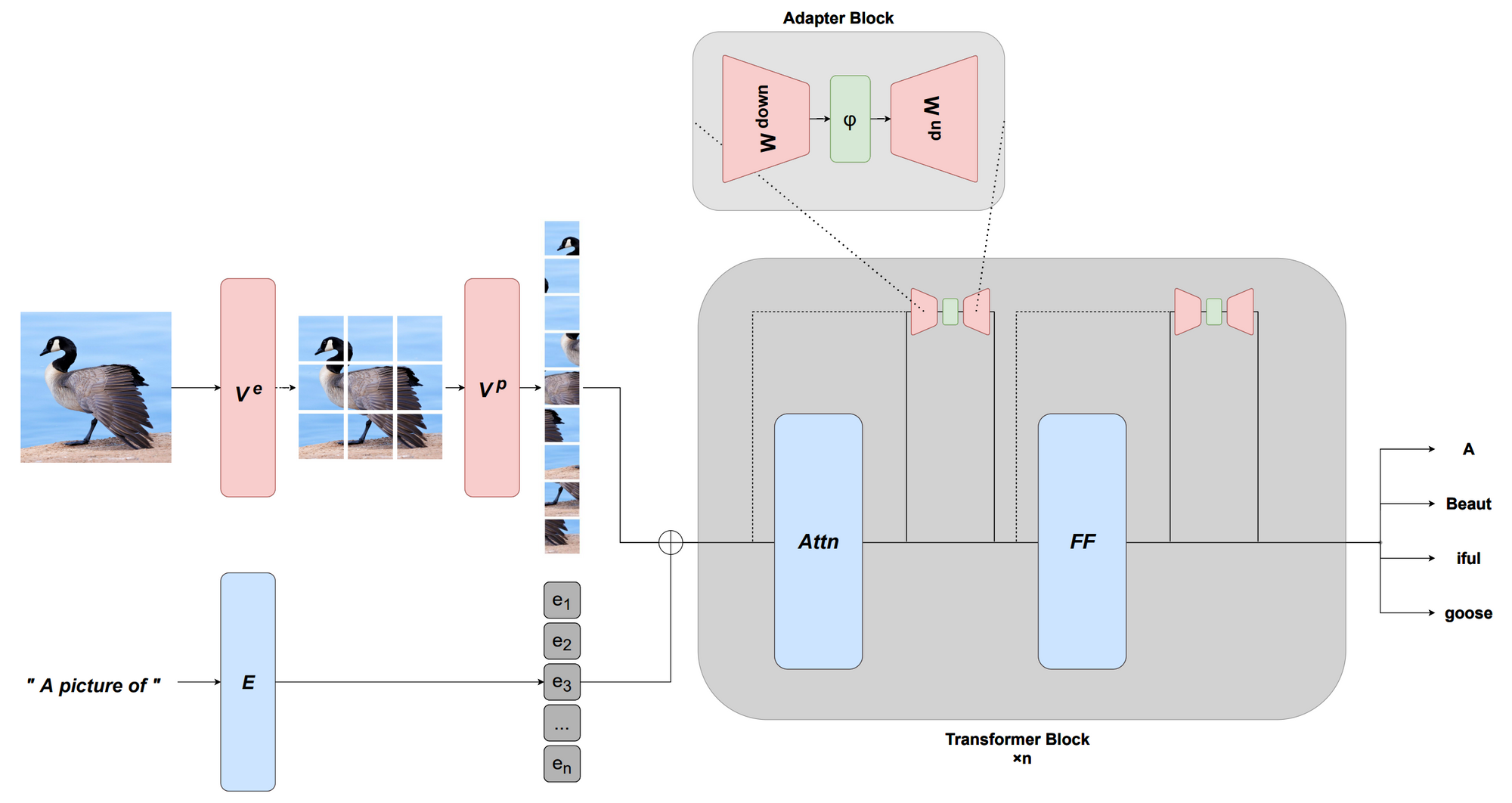

What happened? A downside of pre-trained models is that they are generally very large and often inefficient to use in practice. 2021 brought advances both in more efficient architectures as well as in more efficient fine-tuning methods. On the modeling side, we saw several more efficient versions of self-attention [43][44]. This survey [45] provides an overview of pre-2021 models. Current pre-trained models are so powerful that they can be effectively conditioned by only updating few parameters, which has led to the development of more efficient fine-tuning approaches based on continuous prompts [46][47] and adapters [48][49][50], among others. This capability also enables adaptation to new modalities by learning an appropriate prefix [51] or suitable transformations [52][53]. Other methods such as quantization for creating more efficient optimizers [54] as well as sparsity have also been used.
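To give a feel for why adapter-style fine-tuning is cheap, here is a minimal sketch of a bottleneck adapter: a small down-projection, a nonlinearity, an up-projection, and a residual connection, inserted next to a frozen layer. The sizes (768 and 16) and the zero initialization of the up-projection are assumptions made for illustration and are not taken from any of the cited papers.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) with a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


class Adapter:
    """Bottleneck adapter computing h + W_up @ relu(W_down @ h)."""

    def __init__(self, hidden, bottleneck, seed=0):
        rng = random.Random(seed)
        self.w_down = [[rng.gauss(0, 0.02) for _ in range(hidden)] for _ in range(bottleneck)]
        # Assumed design choice: a zero-initialized up-projection makes the
        # adapter an identity function before any training step.
        self.w_up = [[0.0] * bottleneck for _ in range(hidden)]

    def __call__(self, h):
        z = [max(0.0, a) for a in matvec(self.w_down, h)]
        return [x + u for x, u in zip(h, matvec(self.w_up, z))]

    def num_parameters(self):
        return len(self.w_down) * len(self.w_down[0]) + len(self.w_up) * len(self.w_up[0])


hidden, bottleneck = 768, 16
adapter = Adapter(hidden, bottleneck)
frozen_layer_params = hidden * hidden  # one dense hidden-by-hidden matrix, for comparison
print(adapter.num_parameters(), frozen_layer_params)
```

Only the adapter weights would be updated during fine-tuning, so each new task adds a small number of parameters while the pre-trained weights are shared.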

Why is it important? Models are not useful if they are infeasible or prohibitively expensive to run on standard hardware. Advances in efficiency will ensure that while models are growing larger, they will be beneficial and accessible to practitioners.

What’s next? Efficient models and training methods should become easier to use and more accessible. At the same time, the community will develop more effective ways to interface with large models and to efficiently adapt, combine or modify them without having to pre-train a new model from scratch.

6) Benchmarking

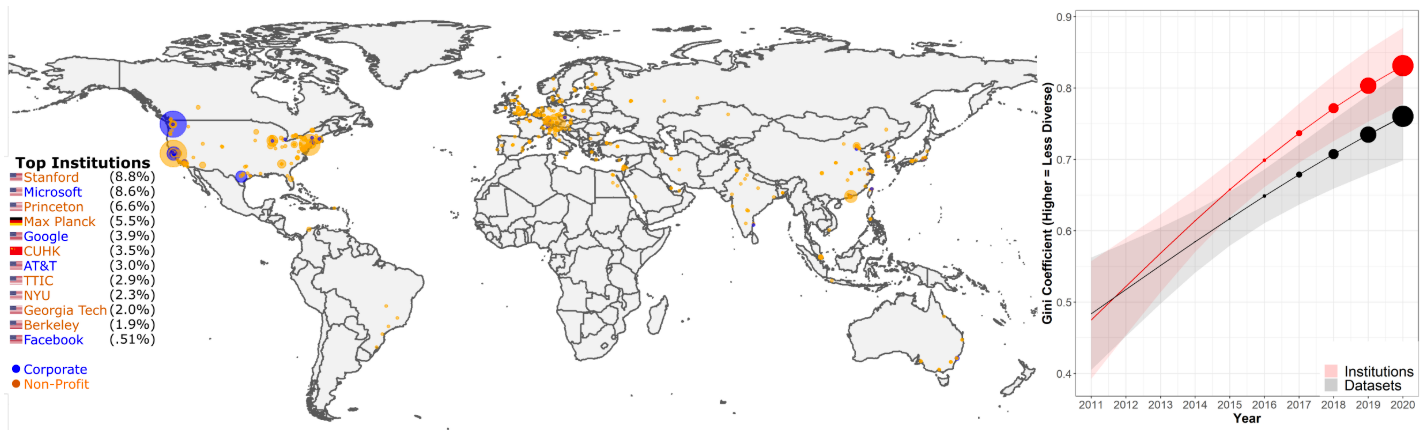

What happened? The rapidly improving capabilities of recent ML and NLP models have outpaced the ability of many benchmarks to measure them. At the same time, communities evaluate on fewer and fewer benchmarks, which originate from a small number of elite institutions [55]. Consequently, 2021 saw much discussion of best practices and ways in which we can reliably evaluate such models going forward, which I cover in this blog post. Notable leaderboard paradigms that emerged in 2021 in the NLP community are dynamic adversarial evaluation [56], community-driven evaluation where community members collaborate on creating evaluation datasets such as BIG-bench, interactive fine-grained evaluation across different error types [57], and multi-dimensional evaluation that goes beyond evaluating models on a single performance metric [58]. In addition, new benchmarks were proposed for influential settings such as few-shot evaluation [59][60] and cross-domain generalization [61]. We also saw new benchmarks focused on evaluating general-purpose pre-trained models, for specific modalities such as speech [62] and specific languages, for instance, Indonesian and Romanian [63][64], as well as across modalities [65] and in a multilingual setting [66]. We also should pay more attention to evaluation metrics. A machine translation (MT) meta-evaluation [67] revealed that among 769 MT papers of the last decade, 74.3% only used BLEU, despite 108 alternative metrics—often with better human correlation—having been proposed. Recent efforts such as GEM [68] and bidimensional leaderboards [69] thus propose to evaluate models and methods jointly.

Why is it important? Benchmarking and evaluation are the linchpins of scientific progress in machine learning and NLP. Without accurate and reliable benchmarks, it is not possible to tell whether we are making genuine progress or overfitting to entrenched datasets and metrics.

What’s next? Increased awareness around issues with benchmarking should lead to a more thoughtful design of new datasets. Evaluation of new models should also focus less on a single performance metric and instead take multiple dimensions into account, such as a model's fairness, efficiency, and robustness.

7) Conditional Image Generation

What happened? Conditional image generation, i.e., generating images based on a text description, saw impressive results in 2021. An art scene emerged around the most recent generation of generative models (see this blog post for an overview). Rather than generating an image directly based on a text input as in the DALL-E model [70], recent approaches steer the output of a powerful generative model such as VQ-GAN [71] using a joint image-and-text embedding model such as CLIP [72]. Likelihood-based diffusion models, which gradually remove noise from a signal, have emerged as powerful new generative models that can outperform GANs [73]. By guiding their outputs based on text inputs, recent models are approaching photorealistic image quality [74]. Such models are also particularly good at inpainting and can modify regions of an image based on a description.
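The idea of gradually removing noise can be sketched with the standard Gaussian noising formulation used by many diffusion models. Everything specific here is an assumption for illustration: the linear noise schedule, its start and end values, and the use of the true noise as an oracle in place of a trained noise-prediction network. Real samplers also denoise iteratively over many steps rather than in one jump.

```python
import math
import random


def alpha_bar(t, num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_s) under an assumed linear noise schedule."""
    product = 1.0
    for s in range(t):
        beta = beta_start + (beta_end - beta_start) * s / (num_steps - 1)
        product *= 1.0 - beta
    return product


def add_noise(x0, noise, t, num_steps):
    """Forward process: blend the clean signal with Gaussian noise at step t."""
    a = alpha_bar(t, num_steps)
    return [math.sqrt(a) * x + math.sqrt(1 - a) * e for x, e in zip(x0, noise)]


def remove_noise(xt, predicted_noise, t, num_steps):
    """Estimate the clean signal from a noisy one, given a noise prediction."""
    a = alpha_bar(t, num_steps)
    return [(x - math.sqrt(1 - a) * e) / math.sqrt(a) for x, e in zip(xt, predicted_noise)]


rng = random.Random(0)
x0 = [0.5, -1.0, 2.0]                       # a tiny stand-in for an image
noise = [rng.gauss(0, 1) for _ in x0]
xt = add_noise(x0, noise, t=500, num_steps=1000)

# A trained network would predict the noise; here the true noise is used as an oracle.
recovered = remove_noise(xt, noise, t=500, num_steps=1000)
print(xt)
print(recovered)
```

Text guidance would enter through the noise prediction, which is conditioned on the description in guided models.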

Why is it important? Automatic generation of high-quality images that can be guided by users opens a wide range of artistic and commercial applications, from the automatic design of visual assets to model-assisted prototyping, design, and personalization.

What’s next? Sampling from recent diffusion-based models is much slower compared to their GAN-based counterparts. These models require improvements in efficiency to make them useful for real-world applications. This area also requires more research in human-computer interaction, to identify the best ways and applications where such models can assist humans.

8) ML for Science

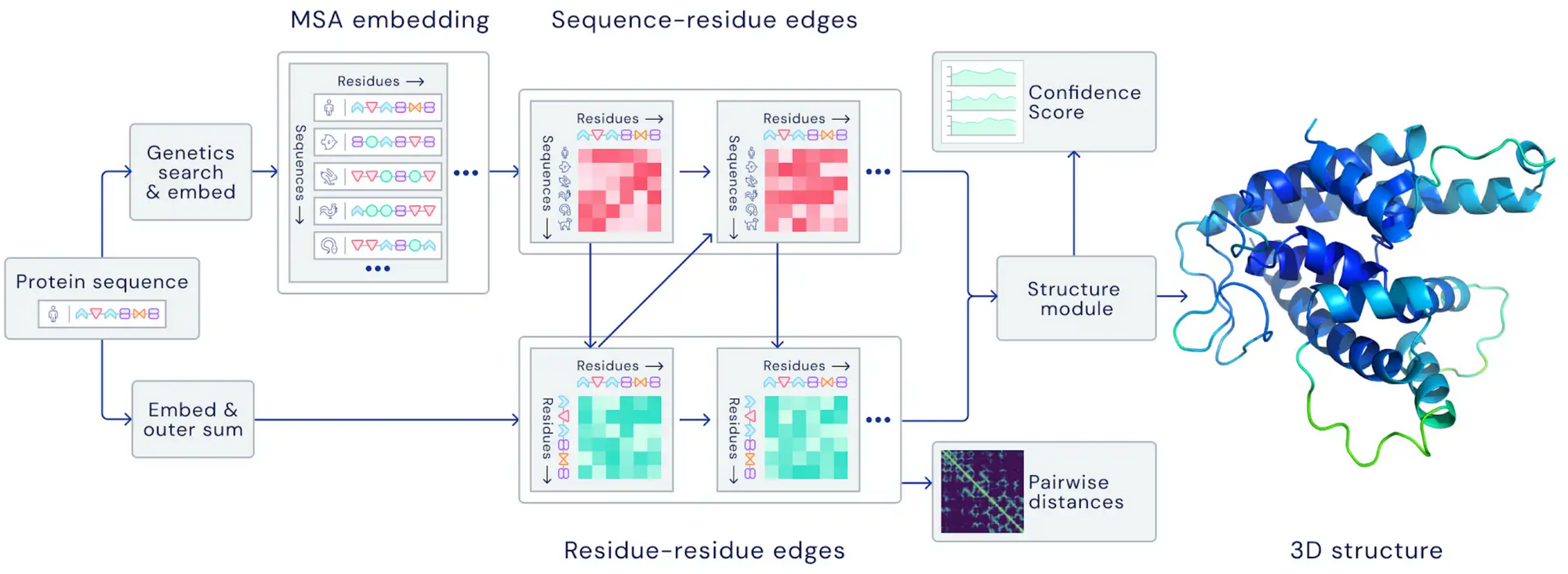

What happened? 2021 saw several breakthroughs in ML applied to advance the natural sciences. In meteorology, advances in precipitation nowcasting and forecasting [75][76] led to substantial improvements in forecast accuracy. In both cases, models outperformed state-of-the-art physics-based forecast models. In biology, AlphaFold 2.0 managed to predict the structure of proteins with unprecedented accuracy, even in cases where no similar structure is known [14:1]. In mathematics, ML was shown to be able to guide the intuition of mathematicians in order to discover new connections and algorithms [77]. Transformer models have also been shown to be capable of learning mathematical properties of differential systems such as local stability when trained on sufficient amounts of data [78].

Why is it important? Advancing our understanding of the natural sciences, and applications within them, is one of the most impactful uses of ML. Powerful ML methods both enable new applications and can greatly speed up existing ones such as drug design.

What’s next? Using models in-the-loop to assist researchers in the discovery and development of new advances is a particularly compelling direction. It requires both the development of powerful models as well as work on interactive machine learning and human-computer interaction.

9) Program Synthesis

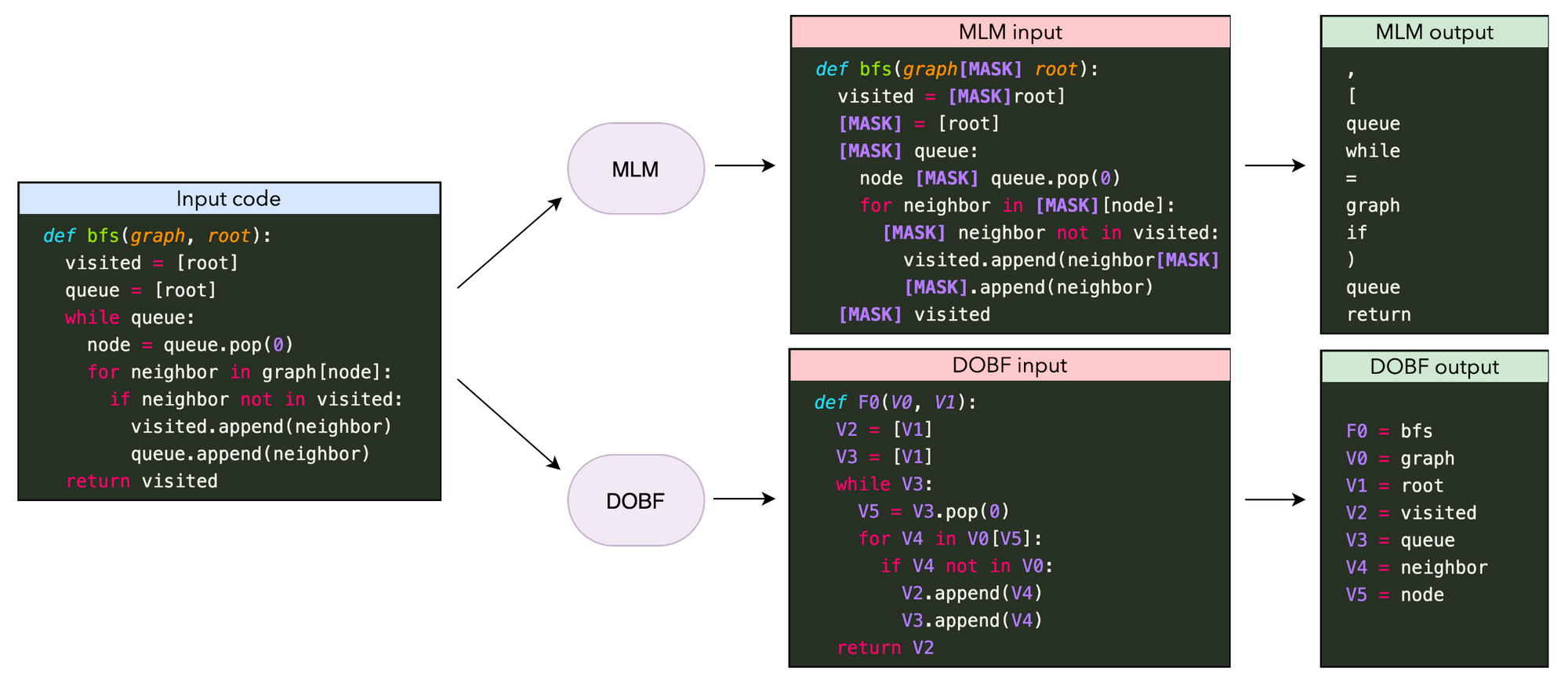

What happened? One of the most notable applications of large language models this year was code generation, which, with Codex [79], saw its first integration into a major product as part of GitHub Copilot. Other advances in pre-training models ranged from better pre-training objectives [80][81] to scaling experiments [82][83]. Generating complex and long-form programs is still a challenge for current models, however. An interesting related direction is learning to execute or model programs, which can be improved by performing multi-step computation where intermediate computation steps are recorded in a "scratchpad" [84].
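As a rough illustration of the scratchpad idea, the sketch below writes out the intermediate steps of a multi-digit addition before the final answer. The line format is hypothetical and not the one used in the cited work; the point is only that a model trained on such targets emits its intermediate computation as text instead of producing the answer in one step. It assumes non-negative integers.

```python
def addition_scratchpad(a, b):
    """Return the scratchpad lines for a + b and the final answer.

    Assumes non-negative integers; the line format is made up for illustration.
    """
    digits_a, digits_b = str(a)[::-1], str(b)[::-1]
    lines, carry, result = [], 0, ""
    for i in range(max(len(digits_a), len(digits_b))):
        da = int(digits_a[i]) if i < len(digits_a) else 0
        db = int(digits_b[i]) if i < len(digits_b) else 0
        total = da + db + carry
        lines.append(f"{da} + {db} + carry {carry} = {total}, write {total % 10}")
        result = str(total % 10) + result
        carry = total // 10
    if carry:
        lines.append(f"final carry {carry}")
        result = str(carry) + result
    return lines, int(result)


steps, answer = addition_scratchpad(29, 57)
# A training target could be the scratchpad followed by the answer.
target = "\n".join(steps) + f"\nanswer: {answer}"
print(target)
```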

Why is it important? Being able to automatically synthesize complex programs is useful for a wide variety of applications such as supporting software engineers.

What’s next? It is still an open question how much code generation models improve the workflow of software engineers in practice [85]. In order to be truly helpful, such models—similarly to dialogue models—need to be able to update their predictions based on new information and need to take the local and global context into account.

10) Bias

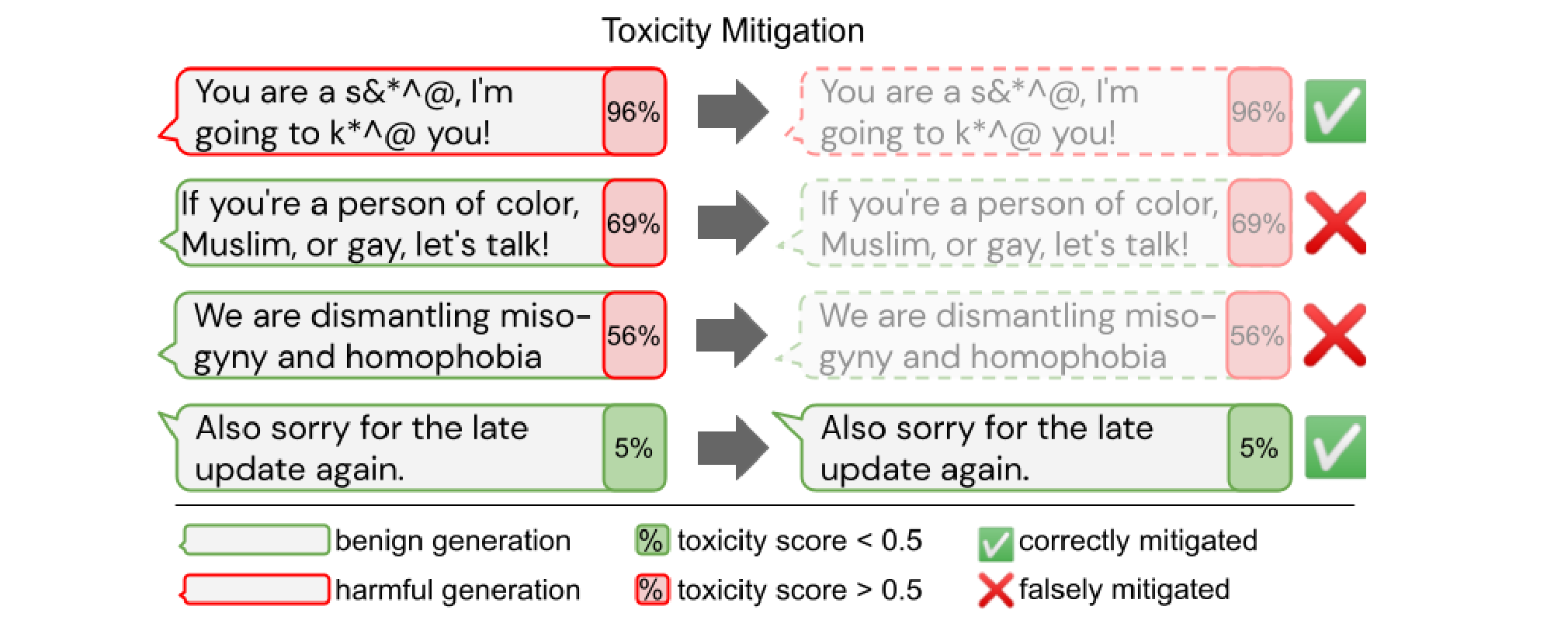

What happened? Given the potential impact of large pre-trained models, it is crucial that they do not contain harmful biases, are not misused to generate harmful content, and are used in a sustainable manner. Several reviews [1:1][86][87] highlight the potential risks of such models. Bias has been investigated with regard to protected attributes such as gender, particular ethnic groups, and political leaning [88][89]. Mitigating harmful behaviour such as toxicity in models, however, comes with trade-offs and can lead to reduced coverage for texts about and authored by marginalized groups [90].

Why is it important? In order to use models in real-world applications, they should not exhibit any harmful bias and not discriminate against any group. Developing a better understanding of the biases of current models and how to remove them is thus crucial for enabling safe and responsible deployment of ML models.

What’s next? Bias has so far been mostly explored in English and in pre-trained models and for specific text generation or classification applications. Given the intended use and lifecycle of such models, we should also aim to identify and mitigate bias in a multilingual setting, with regard to the combination of different modalities, and at different stages of a pre-trained model's usage—after pre-training, after fine-tuning, and at test time.

11) Retrieval Augmentation

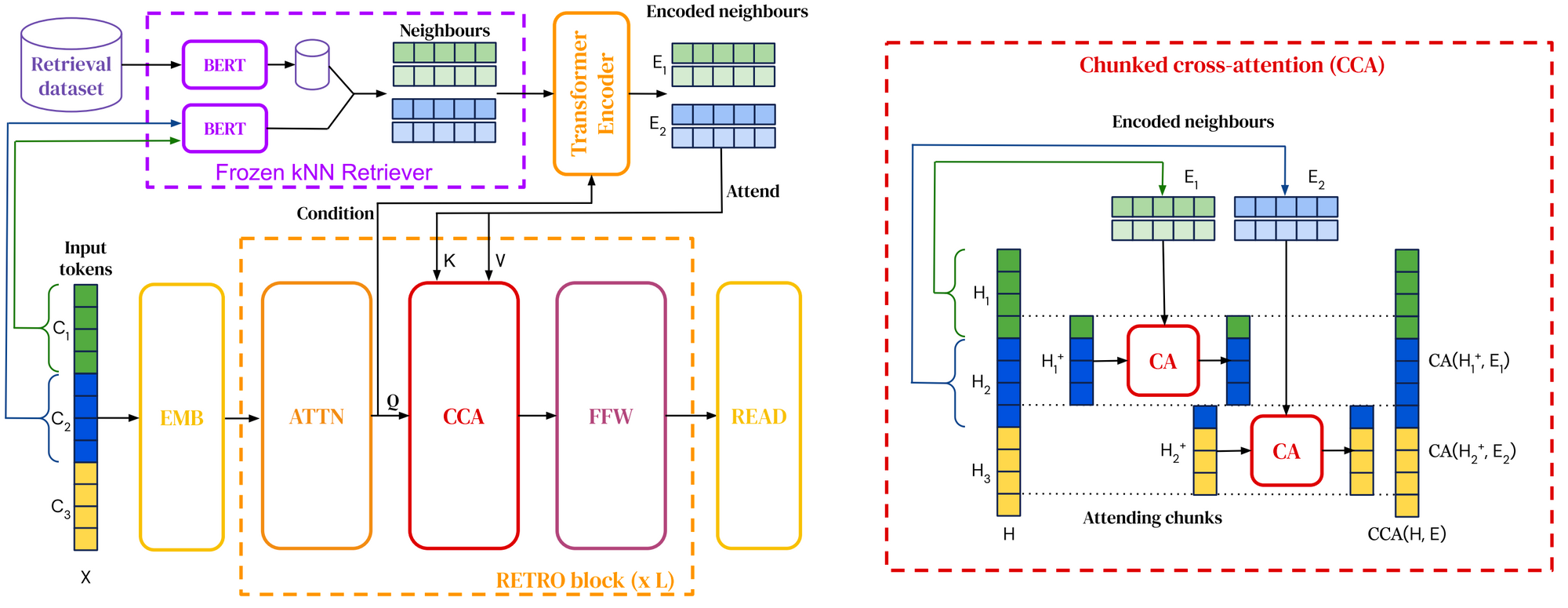

What happened? Retrieval-augmented language models, which integrate retrieval into pre-training and downstream usage, have already featured in my highlights of 2020. In 2021, retrieval corpora have been scaled up to a trillion tokens [91] and models have been equipped with the ability to query the web for answering questions [92][93]. We have also seen new ways to integrate retrieval into pre-trained language models [94][95].

Why is it important? Retrieval augmentation enables models to be much more parameter-efficient as they need to store less knowledge in their parameters and can instead retrieve it. It also enables effective domain adaptation by simply updating the data used for retrieval [96].
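The sketch below shows the basic retrieve-then-condition pattern and why swapping the retrieval corpus is enough to change domains. It uses a toy bag-of-words retriever and made-up documents; real systems use learned dense or sparse retrievers over far larger corpora, and the way retrieved text is fed to the model differs between approaches.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words representation (a stand-in for a learned encoder)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a, b):
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, corpus, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(corpus, key=lambda doc: cosine(q, embed(doc)), reverse=True)[:k]


def build_input(query, corpus):
    """Prepend retrieved text so the model can read facts instead of memorizing them."""
    return " ".join(retrieve(query, corpus)) + " Question: " + query


# Hypothetical corpora, for illustration only.
general_corpus = ["Paris is the capital of France.", "The Nile is a river in Africa."]
medical_corpus = ["Aspirin is used to reduce fever and pain.", "Insulin regulates blood sugar."]

query = "What is aspirin used for?"
print(build_input(query, general_corpus))
print(build_input(query, medical_corpus))  # same model input pipeline, new domain
```

The model parameters stay untouched in both calls; only the data available for retrieval changes.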

What’s next? We might see different forms of retrieval to leverage different kinds of information such as common sense knowledge, factual relations, linguistic information, etc. Retrieval augmentation could also be combined with more structured forms of knowledge retrieval, such as methods from knowledge base population and open information extraction.

12) Token-free Models

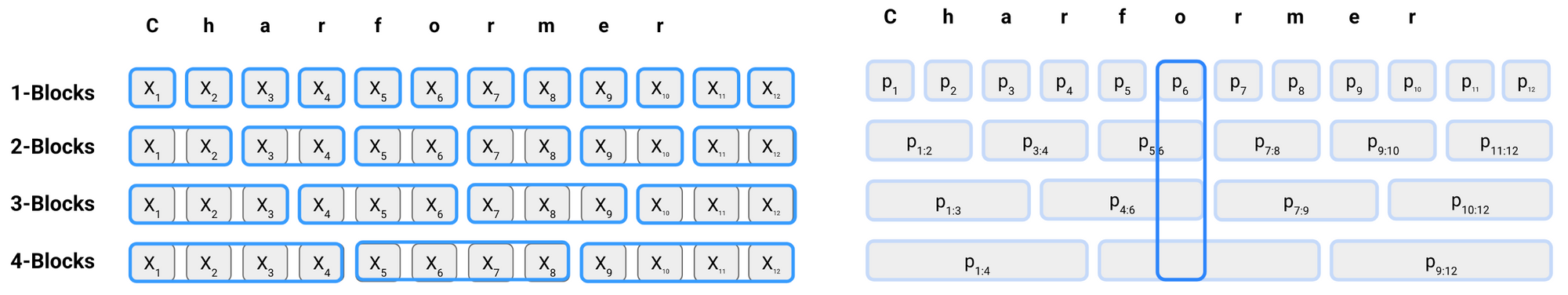

What happened? 2021 saw the emergence of new token-free methods that directly consume a sequence of characters [97][98][99]. These models have been demonstrated to outperform multilingual models and perform particularly well on non-standard language. They are thus a promising alternative to the entrenched subword-based transformer models (see this newsletter for coverage of these 'Char Wars').

Why is it important? Since the advent of pre-trained language models like BERT, text tokenized into subwords has become the standard input format in NLP. However, subword tokenization has been shown to perform poorly on noisy input, such as on typos or spelling variations common on social media, and on certain types of morphology. In addition, it imposes a dependence on the tokenization, which can lead to a mismatch when adapting a model to new data.
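A small sketch of what a token-free input looks like, assuming UTF-8 bytes as the input unit (one possible choice; other token-free models operate on characters or code points). There is no learned vocabulary, so a typo only perturbs a couple of positions instead of changing how the word is segmented.

```python
def byte_ids(text):
    """Map text to a sequence of integer IDs in [0, 255] without any tokenizer."""
    return list(text.encode("utf-8"))


clean, noisy = "language", "langauge"  # the second word contains a transposition typo
clean_ids, noisy_ids = byte_ids(clean), byte_ids(noisy)

# Count positions where the two ID sequences differ.
num_changed = sum(a != b for a, b in zip(clean_ids, noisy_ids))
print(clean_ids)
print(noisy_ids)
print(num_changed)

# Non-ASCII characters simply take more than one byte; nothing is out of vocabulary.
accented = byte_ids("na\u00efve")
print(accented)

# The mapping is lossless, so the original text can always be recovered.
print(bytes(accented).decode("utf-8"))
```

The trade-off is sequence length: the same text becomes a longer input than with subwords, which is one reason efficiency matters for these models.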

What’s next? Due to their increased flexibility, token-free models are better able to model morphology and may generalize better to new words and language change. It is still unclear, however, how they fare compared to subword-based methods on different types of morphological or word formation processes and what trade-offs these models make.

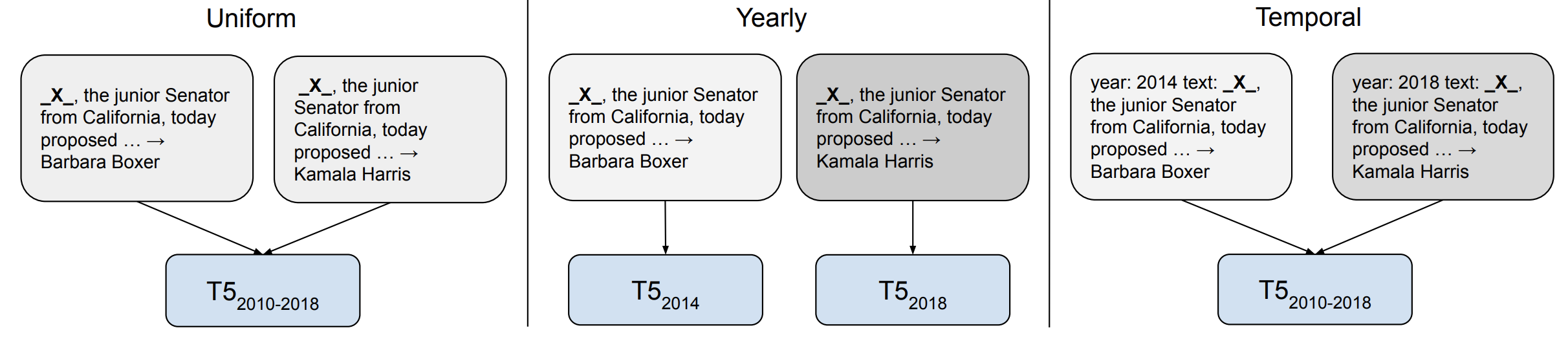

13) Temporal Adaptation

What happened? Models are biased in many ways based on the data that they are trained on. One of these biases that has received increasing attention in 2021 is a bias regarding the timeframe of the data the models have been trained on. Given that language continuously evolves and new terms enter the discourse, models that are trained on outdated data have been shown to generalize comparatively poorly [100]. When temporal adaptation is useful, however, may depend on the downstream task. For instance, it may be less helpful for tasks where event-driven changes in language use are not relevant for task performance [101].

Why is it important? Temporal adaptation is particularly important for question answering where answers to a question may change depending on when the question was asked [102][103].

What’s next? Developing methods that can adapt to new timeframes requires moving away from the static pre-train–fine-tune setting and requires efficient ways to update the knowledge of pre-trained models. Both efficient methods as well as retrieval augmentation are useful in this regard. It also requires developing models for which the input does not exist in a vacuum but is grounded to extra-linguistic context and the real world. For more work on this topic, check out the EvoNLP workshop at EMNLP 2022.

14) The Importance of Data

What happened? Data has long been a critical ingredient for ML but is typically overshadowed by advances in modelling. Given the importance of data for scaling up models, however, attention is slowly shifting from model-centric to data-centric approaches. Important topics include how to build and maintain new datasets efficiently and how to ensure data quality (see the Data-centric AI workshop at NeurIPS 2021 for an overview). In particular, large-scale datasets used by pre-trained models came under scrutiny this year including multi-modal datasets [104] as well as English and multilingual text corpora [105][106]. Such an analysis can inform the design of more representative resources such as MaRVL [107] for multi-modal reasoning.

Why is it important? Data is critically important for training large-scale ML models and a key factor in how models acquire new information. As models are scaled up, ensuring data quality at scale becomes more challenging.

What’s next? We currently lack best practices and principled methods regarding how to efficiently build datasets for different tasks, reliably ensure data quality, etc. It is also still poorly understood how data interacts with a model's learning and how the data shapes a model's biases. For instance, training data filtering may have negative effects on a language model's coverage of marginalized groups [90:1].

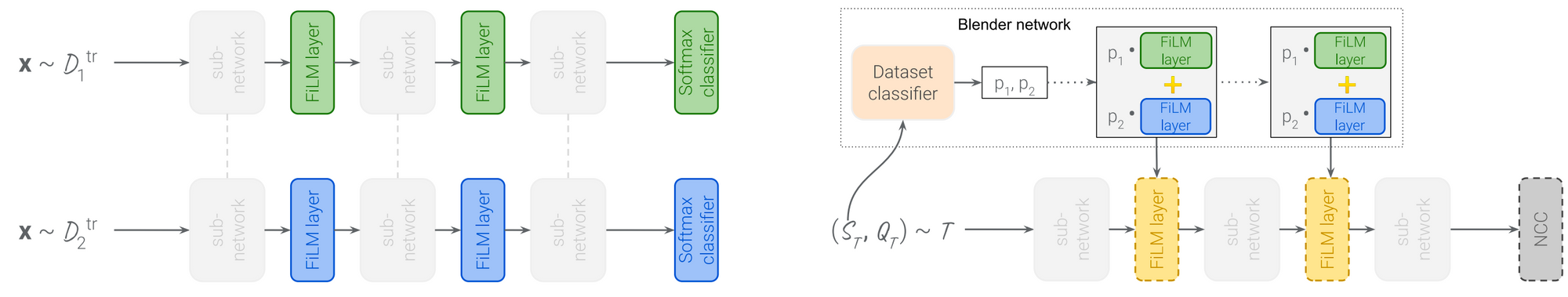

15) Meta-learning

What happened? Meta-learning and transfer learning, despite sharing the common goal of few-shot learning, have been studied mostly in distinct communities. On a new benchmark [108], large-scale transfer learning methods outperform meta-learning-based approaches. A promising direction is to scale up meta-learning methods, which, combined with more memory-efficient training methods, can improve the performance of meta-learning models on real-world benchmarks [109]. Meta-learning methods can also be combined with efficient adaptation methods such as FiLM layers [110] to adapt a general model effectively to new datasets [111].
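For readers unfamiliar with FiLM, the following minimal sketch shows the core operation: each feature is scaled and shifted by parameters that depend on some conditioning input, here a hypothetical task embedding. The linear generator, the weights, and all values are made up for illustration and do not reproduce the setup of the cited work.

```python
def film(features, gamma, beta):
    """Feature-wise linear modulation: scale and shift each feature."""
    return [g * x + b for x, g, b in zip(features, gamma, beta)]


def film_parameters(task_embedding, w_gamma, w_beta):
    """Generate per-feature scale and shift from a task embedding.

    Assumed parameterization: gamma is predicted as an offset from 1 so that
    an all-zero embedding leaves the features unchanged.
    """
    gamma = [1.0 + sum(w * t for w, t in zip(row, task_embedding)) for row in w_gamma]
    beta = [sum(w * t for w, t in zip(row, task_embedding)) for row in w_beta]
    return gamma, beta


features = [1.0, 2.0]                 # activations of a frozen, general model
w_gamma = [[1.0, 0.0], [0.0, -0.5]]   # hypothetical generator weights
w_beta = [[0.0, 0.0], [0.0, 1.0]]

gamma, beta = film_parameters([1.0, 1.0], w_gamma, w_beta)
print(film(features, gamma, beta))

# With a zero task embedding the layer reduces to the identity.
identity_gamma, identity_beta = film_parameters([0.0, 0.0], w_gamma, w_beta)
print(film(features, identity_gamma, identity_beta))
```

Only the small generator needs to be learned per dataset, which is what makes this kind of layer attractive for adapting a general model.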

Why is it important? Meta-learning is an important paradigm but has fallen short of yielding state-of-the-art results on standard benchmarks that are not designed with meta-learning systems in mind. Bringing meta-learning and transfer learning communities closer together may lead to more practical meta-learning methods that are useful in real-world applications.

What’s next? Meta-learning can be particularly useful when combined with the large number of natural tasks available for massive multi-task learning. Meta-learning can also help improve prompting by learning how to design or use prompts based on the large number of available prompts.

Citation

For attribution in academic contexts or books, please cite this work as:

Sebastian Ruder, "ML and NLP Research Highlights of 2021". http://ruder.io/ml-highlights-2021/, 2022.

BibTeX citation:

@misc{ruder2022mlhighlights,

author = {Ruder, Sebastian},

title = {{ML and NLP Research Highlights of 2021}},

year = {2022},

howpublished = {\url{http://ruder.io/ml-highlights-2021/}},

}

Credits

Thanks to Eleni Triantafillou and Dani Yogatama for thoughts and suggestions.

Bommasani, R., Hudson, D. A., Adeli, E., Altman, R., Arora, S., von Arx, S., … Liang, P. (2021). On the Opportunities and Risks of Foundation Models. http://arxiv.org/abs/2108.07258 ↩︎ ↩︎

Dosovitskiy, A., Beyer, L., Kolesnikov, A., Weissenborn, D., Zhai, X., Unterthiner, T., … Houlsby, N. (2021). An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. In Proceedings of ICLR 2021. ↩︎

Zhai, X., Kolesnikov, A., Houlsby, N., & Beyer, L. (2021). Scaling Vision Transformers. http://arxiv.org/abs/2106.04560 ↩︎

He, K., Chen, X., Xie, S., Li, Y., Dollár, P., & Girshick, R. (2021). Masked Autoencoders Are Scalable Vision Learners. http://arxiv.org/abs/2111.06377 ↩︎

Goyal, P., Caron, M., Lefaudeux, B., Xu, M., Wang, P., Pai, V., … Bojanowski, P. (2021). Self-supervised Pretraining of Visual Features in the Wild. http://arxiv.org/abs/2103.01988 ↩︎

Baevski, A., Zhou, H., Mohamed, A., & Auli, M. (2020). wav2vec 2.0: A framework for self-supervised learning of speech representations. Advances in Neural Information Processing Systems, 2020. ↩︎

Chung, Y.-A., Zhang, Y., Han, W., Chiu, C.-C., Qin, J., Pang, R., & Wu, Y. (2021). W2v-BERT: Combining Contrastive Learning and Masked Language Modeling for Self-Supervised Speech Pre-Training. http://arxiv.org/abs/2108.06209 ↩︎

Babu, A., Wang, C., Tjandra, A., Lakhotia, K., Xu, Q., Goyal, N., … Auli, M. (2021). XLS-R: Self-supervised Cross-lingual Speech Representation Learning at Scale. http://arxiv.org/abs/2111.09296 ↩︎

Fu, T.-J., Li, L., Gan, Z., Lin, K., Wang, W. Y., Wang, L., & Liu, Z. (2021). VIOLET: End-to-End Video-Language Transformers with Masked Visual-token Modeling. http://arxiv.org/abs/2111.12681 ↩︎

Bapna, A., Chung, Y., Wu, N., Gulati, A., Jia, Y., Clark, J. H., … Zhang, Y. (2021). SLAM: A Unified Encoder for Speech and Language Modeling via Speech-Text Joint Pre-Training. http://arxiv.org/abs/2110.10329 ↩︎

Bugliarello, E., Cotterell, R., Okazaki, N., & Elliott, D. (2021). Multimodal pretraining unmasked: A meta-analysis and a unified framework of vision-and-language berts. Transactions of the Association for Computational Linguistics, 9, 978–994. https://doi.org/10.1162/tacl_a_00408 ↩︎

Hendricks, L. A., Mellor, J., Schneider, R., Alayrac, J. B., & Nematzadeh, A. (2021). Decoupling the role of data, attention, and losses in multimodal transformers. Transactions of the Association for Computational Linguistics, 9, 570–585. https://doi.org/10.1162/tacl_a_00385 ↩︎

Chen, L., Lu, K., Rajeswaran, A., Lee, K., Grover, A., Laskin, M., … Mordatch, I. (2021). Decision Transformer: Reinforcement Learning via Sequence Modeling. http://arxiv.org/abs/2106.01345 ↩︎

Jumper, J., Evans, R., Pritzel, A., Green, T., Figurnov, M., Ronneberger, O., … Hassabis, D. (2021). Highly accurate protein structure prediction with AlphaFold. Nature, 596(7873), 583–589. ↩︎ ↩︎

Abnar, S., Dehghani, M., Neyshabur, B., & Sedghi, H. (2021). Exploring the Limits of Large Scale Pre-training. http://arxiv.org/abs/2110.02095 ↩︎

Tay, Y., Dehghani, M., Rao, J., Fedus, W., Abnar, S., Chung, H. W., … Metzler, D. (2021). Scale Efficiently: Insights from Pre-training and Fine-tuning Transformers. http://arxiv.org/abs/2109.10686 ↩︎

Sanh, V., Webson, A., Raffel, C., Bach, S. H., Sutawika, L., Alyafeai, Z., … Rush, A. M. (2021). Multitask Prompted Training Enables Zero-Shot Task Generalization. http://arxiv.org/abs/2110.08207 ↩︎

Wei, J., Bosma, M., Zhao, V. Y., Guu, K., Yu, A. W., Lester, B., … Le, Q. V. (2021). Finetuned Language Models Are Zero-Shot Learners. http://arxiv.org/abs/2109.01652 ↩︎ ↩︎

Aribandi, V., Tay, Y., Schuster, T., Rao, J., Zheng, H. S., Mehta, S. V., … Metzler, D. (2021). ExT5: Towards Extreme Multi-Task Scaling for Transfer Learning. http://arxiv.org/abs/2111.10952 ↩︎

Bansal, T., Gunasekaran, K., Wang, T., Munkhdalai, T., & McCallum, A. (2021). Diverse Distributions of Self-Supervised Tasks for Meta-Learning in NLP. In Proceedings of EMNLP 2021 (pp. 5812–5824). https://doi.org/10.18653/v1/2021.emnlp-main.469 ↩︎

Min, S., Lewis, M., Zettlemoyer, L., & Hajishirzi, H. (2021). MetaICL: Learning to Learn In Context. http://arxiv.org/abs/2110.15943 ↩︎

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., … Polosukhin, I. (2017). Attention Is All You Need. In Proceedings of NIPS 2017. ↩︎

Jaegle, A., Gimeno, F., Brock, A., Zisserman, A., Vinyals, O., & Carreira, J. (2021). Perceiver: General Perception with Iterative Attention. In Proceedings of ICML 2021. http://arxiv.org/abs/2103.03206 ↩︎

Jaegle, A., Borgeaud, S., Alayrac, J.-B., Doersch, C., Ionescu, C., Ding, D., … Carreira, J. (2021). Perceiver IO: A General Architecture for Structured Inputs & Outputs. http://arxiv.org/abs/2107.14795 ↩︎

Tolstikhin, I., Houlsby, N., Kolesnikov, A., Beyer, L., Zhai, X., Unterthiner, T., … Dosovitskiy, A. (2021). MLP-Mixer: An all-MLP Architecture for Vision. http://arxiv.org/abs/2105.01601 ↩︎

Liu, H., Dai, Z., So, D. R., & Le, Q. V. (2021). Pay Attention to MLPs. http://arxiv.org/abs/2105.08050 ↩︎

Lee-Thorp, J., Ainslie, J., Eckstein, I., & Ontanon, S. (2021). FNet: Mixing Tokens with Fourier Transforms. http://arxiv.org/abs/2105.03824 ↩︎

Tay, Y., Dehghani, M., Gupta, J., Bahri, D., Aribandi, V., Qin, Z., & Metzler, D. (2021). Are Pre-trained Convolutions Better than Pre-trained Transformers? In Proceedings of ACL 2021. http://arxiv.org/abs/2105.03322 ↩︎

Clark, K., Luong, M.-T., Le, Q. V., & Manning, C. D. (2020). ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators. In Proceedings of ICLR 2020. ↩︎

He, P., Gao, J., & Chen, W. (2021). DeBERTaV3: Improving DeBERTa using ELECTRA-Style Pre-Training with Gradient-Disentangled Embedding Sharing. http://arxiv.org/abs/2111.09543 ↩︎

Agarwal, R., Melnick, L., Frosst, N., Zhang, X., Lengerich, B., Caruana, R., & Hinton, G. (2021). Neural Additive Models: Interpretable Machine Learning with Neural Nets. In Proceedings of NeurIPS 2021. http://arxiv.org/abs/2004.13912 ↩︎

Brown, T. B., Mann, B., Ryder, N., Subbiah, M., Kaplan, J., Dhariwal, P., … Amodei, D. (2020). Language Models are Few-Shot Learners. In Proceedings of NeurIPS 2020. http://arxiv.org/abs/2005.14165 ↩︎

Schick, T., & Schütze, H. (2021). Exploiting cloze questions for few shot text classification and natural language inference. In Proceedings of EACL 2021 (pp. 255–269). ↩︎

Schick, T., & Schütze, H. (2021). It’s Not Just Size That Matters: Small Language Models Are Also Few-Shot Learners. In Proceedings of NAACL 2021. http://arxiv.org/abs/2009.07118 ↩︎

Tam, D., Menon, R. R., Bansal, M., Srivastava, S., & Raffel, C. (2021). Improving and Simplifying Pattern Exploiting Training. http://arxiv.org/abs/2103.11955 ↩︎

Perez, E., Kiela, D., & Cho, K. (2021). True Few-Shot Learning with Language Models. In Proceedings of NeurIPS 2021. http://arxiv.org/abs/2105.11447 ↩︎

Zheng, Y., Zhou, J., Qian, Y., Ding, M., Li, J., Salakhutdinov, R., … Yang, Z. (2021). FewNLU: Benchmarking State-of-the-Art Methods for Few-Shot Natural Language Understanding. http://arxiv.org/abs/2109.12742 ↩︎

Liu, P., Yuan, W., Fu, J., Jiang, Z., Hayashi, H., & Neubig, G. (2021). Pre-train, Prompt, and Predict: A Systematic Survey of Prompting Methods in Natural Language Processing. http://arxiv.org/abs/2107.13586 ↩︎

Scao, T. Le, & Rush, A. M. (2021). How Many Data Points is a Prompt Worth? In Proceedings of NAACL 2021. http://arxiv.org/abs/2103.08493 ↩︎

Ratner, A., De Sa, C., Wu, S., Selsam, D., & Ré, C. (2016). Data Programming: Creating Large Training Sets, Quickly. In Advances in Neural Information Processing Systems 29 (NIPS 2016). http://arxiv.org/abs/1605.07723 ↩︎

Mishra, S., Khashabi, D., Baral, C., & Hajishirzi, H. (2021). Cross-Task Generalization via Natural Language Crowdsourcing Instructions. http://arxiv.org/abs/2104.08773 ↩︎

Wiegreffe, S., & Marasović, A. (2021). Teach Me to Explain: A Review of Datasets for Explainable Natural Language Processing. In 35th Conference on Neural Information Processing Systems (NeurIPS 2021) Track on Datasets and Benchmarks. http://arxiv.org/abs/2102.12060 ↩︎

Ma, X., Kong, X., Wang, S., Zhou, C., May, J., Ma, H., & Zettlemoyer, L. (2021). Luna: Linear Unified Nested Attention. In Proceedings of NeurIPS 2021. http://arxiv.org/abs/2106.01540 ↩︎

Peng, H., Pappas, N., Yogatama, D., Schwartz, R., Smith, N. A., & Kong, L. (2021). Random Feature Attention. In Proceedings of ICLR 2021. ↩︎

Tay, Y., Dehghani, M., Bahri, D., & Metzler, D. (2020). Efficient Transformers: A Survey. ArXiv Preprint ArXiv:2009.06732. http://arxiv.org/abs/2009.06732 ↩︎

Lester, B., Al-Rfou, R., & Constant, N. (2021). The Power of Scale for Parameter-Efficient Prompt Tuning. In Proceedings of EMNLP 2021. http://arxiv.org/abs/2104.08691 ↩︎

Liu, X., Zheng, Y., Du, Z., Ding, M., Qian, Y., Yang, Z., & Tang, J. (2021). GPT Understands, Too. http://arxiv.org/abs/2103.10385 ↩︎

Mao, Y., Mathias, L., Hou, R., Almahairi, A., Ma, H., Han, J., … Khabsa, M. (2021). UniPELT: A Unified Framework for Parameter-Efficient Language Model Tuning. http://arxiv.org/abs/2110.07577 ↩︎

He, J., Zhou, C., Ma, X., Berg-Kirkpatrick, T., & Neubig, G. (2021). Towards a Unified View of Parameter-Efficient Transfer Learning. http://arxiv.org/abs/2110.04366 ↩︎

Mahabadi, R. K., Henderson, J., & Ruder, S. (2021). Compacter: Efficient Low-Rank Hypercomplex Adapter Layers. In Proceedings of NeurIPS 2021. http://arxiv.org/abs/2106.04647 ↩︎

Tsimpoukelli, M., Menick, J., Cabi, S., Eslami, S. M. A., Vinyals, O., & Hill, F. (2021). Multimodal Few-Shot Learning with Frozen Language Models. In Proceedings of NeurIPS 2021. http://arxiv.org/abs/2106.13884 ↩︎

Pfeiffer, J., Vulić, I., Gurevych, I., & Ruder, S. (2020). MAD-X: An Adapter-based Framework for Multi-task Cross-lingual Transfer. In Proceedings of EMNLP 2020. ↩︎

Eichenberg, C., Black, S., Weinbach, S., Parcalabescu, L., & Frank, A. (2021). MAGMA – Multimodal Augmentation of Generative Models through Adapter-based Finetuning. https://arxiv.org/abs/2112.05253 ↩︎

Dettmers, T., Lewis, M., Shleifer, S., & Zettlemoyer, L. (2021). 8-bit Optimizers via Block-wise Quantization. http://arxiv.org/abs/2110.02861 ↩︎

Koch, B., Denton, E., Hanna, A., & Foster, J. G. (2021). Reduced, Reused and Recycled: The Life of a Dataset in Machine Learning Research. In 35th Conference on Neural Information Processing Systems (NeurIPS 2021) Track on Datasets and Benchmarks. http://arxiv.org/abs/2112.01716 ↩︎

Kiela, D., Bartolo, M., Nie, Y., Kaushik, D., Geiger, A., Wu, Z., … Williams, A. (2021). Dynabench: Rethinking Benchmarking in NLP. In Proceedings of NAACL 2021 (pp. 4110–4124). https://doi.org/10.18653/v1/2021.naacl-main.324 ↩︎

Liu, P., Fu, J., Xiao, Y., Yuan, W., Chang, S., Dai, J., … Neubig, G. (2021). ExplainaBoard: An Explainable Leaderboard for NLP. In Proceedings of ACL 2021: System demonstrations (pp. 280–289). ↩︎

Ma, Z., Ethayarajh, K., Thrush, T., Jain, S., Wu, L., Jia, R., … Kiela, D. (2021). Dynaboard: An Evaluation-As-A-Service Platform for Holistic Next-Generation Benchmarking. http://arxiv.org/abs/2106.06052 ↩︎

Bragg, J., Cohan, A., Lo, K., & Beltagy, I. (2021). FLEX: Unifying Evaluation for Few-Shot NLP. In Proceedings of NeurIPS 2021. http://arxiv.org/abs/2107.07170 ↩︎

Ye, Q., Lin, B. Y., & Ren, X. (2021). CrossFit: A Few-shot Learning Challenge for Cross-task Generalization in NLP. In Proceedings of EMNLP 2021. ↩︎

Koh, P. W., Sagawa, S., Marklund, H., Xie, S. M., Zhang, M., Balsubramani, A., … Liang, P. (2021). WILDS: A Benchmark of in-the-Wild Distribution Shifts. In Proceedings of ICML 2021. http://arxiv.org/abs/2012.07421 ↩︎

Yang, S., Chi, P.-H., Chuang, Y.-S., Lai, C.-I. J., Lakhotia, K., Lin, Y. Y., … Lee, H. (2021). SUPERB: Speech processing Universal PERformance Benchmark. In Proceedings of Interspeech 2021. http://arxiv.org/abs/2105.01051 ↩︎

Cahyawijaya, S., Winata, G. I., Wilie, B., Vincentio, K., Li, X., Kuncoro, A., … Fung, P. (2021). IndoNLG: Benchmark and Resources for Evaluating Indonesian Natural Language Generation. In Proceedings of EMNLP 2021 (pp. 8875–8898). https://doi.org/10.18653/v1/2021.emnlp-main.699 ↩︎

Dumitrescu, S., Rebeja, P., Rosia, L., Marchidan, G., Yogatama, D., Avram, A., … Morogan, L. (2021). LiRo: Benchmark and leaderboard for Romanian language tasks. In 35th Conference on Neural Information Processing Systems (NeurIPS 2021) Track on Datasets and Benchmarks. ↩︎

Tamkin, A., Liu, V., Lu, R., Fein, D., Schultz, C., & Goodman, N. (2021). DABS: A Domain-Agnostic Benchmark for Self-Supervised Learning. In 35th Conference on Neural Information Processing Systems (NeurIPS 2021) Track on Datasets and Benchmarks. http://arxiv.org/abs/2111.12062 ↩︎

Ruder, S., Constant, N., Botha, J., Siddhant, A., Firat, O., Fu, J., … Johnson, M. (2021). XTREME-R: Towards More Challenging and Nuanced Multilingual Evaluation. In Proceedings of EMNLP 2021. http://arxiv.org/abs/2104.07412 ↩︎

Marie, B., Fujita, A., & Rubino, R. (2021). Scientific Credibility of Machine Translation Research: A Meta-Evaluation of 769 Papers. In Proceedings of ACL 2021 (pp. 7297–7306). https://doi.org/10.18653/v1/2021.acl-long.566 ↩︎

Gehrmann, S., Adewumi, T., Aggarwal, K., Ammanamanchi, P. S., Anuoluwapo, A., Bosselut, A., … Zhou, J. (2021). The GEM Benchmark: Natural Language Generation, its Evaluation and Metrics. http://arxiv.org/abs/2102.01672 ↩︎

Kasai, J., Sakaguchi, K., Le Bras, R., Dunagan, L., Morrison, J., Fabbri, A. R., … Smith, N. A. (2021). Bidimensional Leaderboards: Generate and Evaluate Language Hand in Hand. http://arxiv.org/abs/2112.04139 ↩︎

Ramesh, A., Pavlov, M., Goh, G., Gray, S., Voss, C., Radford, A., … Sutskever, I. (2021). Zero-Shot Text-to-Image Generation. https://arxiv.org/abs/2102.12092 ↩︎

Esser, P., Rombach, R., & Ommer, B. (2020). Taming Transformers for High-Resolution Image Synthesis. https://arxiv.org/abs/2012.09841 ↩︎

Radford, A., Kim, J. W., Hallacy, C., Ramesh, A., Goh, G., Agarwal, S., … Sutskever, I. (2021). Learning Transferable Visual Models From Natural Language Supervision. http://arxiv.org/abs/2103.00020 ↩︎

Dhariwal, P., & Nichol, A. (2021). Diffusion Models Beat GANs on Image Synthesis. https://arxiv.org/abs/2105.05233 ↩︎

Nichol, A., Dhariwal, P., Ramesh, A., Shyam, P., Mishkin, P., McGrew, B., … Chen, M. (2021). GLIDE: Towards Photorealistic Image Generation and Editing with Text-Guided Diffusion Models. http://arxiv.org/abs/2112.10741 ↩︎

Ravuri, S., Lenc, K., Willson, M., Kangin, D., Lam, R., Mirowski, P., … Mohamed, S. (2021). Skillful Precipitation Nowcasting using Deep Generative Models of Radar. Nature, 597. https://www.nature.com/articles/s41586-021-03854-z ↩︎

Espeholt, L., Agrawal, S., Sønderby, C., Kumar, M., Heek, J., Bromberg, C., … Kalchbrenner, N. (2021). Skillful Twelve Hour Precipitation Forecasts using Large Context Neural Networks. http://arxiv.org/abs/2111.07470 ↩︎

Davies, A., Veličković, P., Buesing, L., Blackwell, S., Zheng, D., Tomašev, N., … Kohli, P. (2021). Advancing mathematics by guiding human intuition with AI. Nature, 600(7887), 70–74. https://doi.org/10.1038/s41586-021-04086-x ↩︎

Charton, F., Hayat, A., & Lample, G. (2021). Deep Differential System Stability: Learning advanced computations from examples. In Proceedings of ICLR 2021. ↩︎

Chen, M., Tworek, J., Jun, H., Yuan, Q., Ponde, H., Kaplan, J., … Zaremba, W. (2021). Evaluating Large Language Models Trained on Code. http://arxiv.org/abs/2107.03374 ↩︎

Roziere, B., Lachaux, M.-A., Szafraniec, M., & Lample, G. (2021). DOBF: A Deobfuscation Pre-Training Objective for Programming Languages. In Proceedings of NeurIPS 2021. ↩︎

Jain, P., Jain, A., Zhang, T., Abbeel, P., Gonzalez, J. E., & Stoica, I. (2021). Contrastive Code Representation Learning. In Proceedings of EMNLP 2021. ↩︎

Elnaggar, A., Gibbs, T., & Matthes, F. (2021). CodeTrans: Towards Cracking the Language of Silicone’s Code Through Self-Supervised Deep Learning and High Performance Computing. ↩︎

Austin, J., Odena, A., Nye, M., Bosma, M., Michalewski, H., Dohan, D., … Sutton, C. (2021). Program Synthesis with Large Language Models. http://arxiv.org/abs/2108.07732 ↩︎

Nye, M., Andreassen, A. J., Gur-Ari, G., Michalewski, H., Austin, J., Bieber, D., … Odena, A. (2021). Show Your Work: Scratchpads for Intermediate Computation with Language Models. http://arxiv.org/abs/2112.00114 ↩︎

Xu, F. F., Vasilescu, B., & Neubig, G. (2021). In-IDE Code Generation from Natural Language: Promise and Challenges. ACM Transactions on Software Engineering and Methodology. ↩︎

Bender, E., Gebru, T., McMillan-Major, A., & Shmitchell, S. (2021). On the dangers of stochastic parrots: can language models be too big? In FAccT 2021 - Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency. Association for Computing Machinery. https://doi.org/10.1145/3442188.3445922 ↩︎

Weidinger, L., Mellor, J., Rauh, M., Griffin, C., Uesato, J., Huang, P.-S., … Gabriel, I. (2021). Ethical and social risks of harm from Language Models. ↩︎

Liu, R., Jia, C., Wei, J., Xu, G., Wang, L., & Vosoughi, S. (2021). Mitigating Political Bias in Language Models Through Reinforced Calibration. In Proceedings of AAAI 2021. ↩︎

Ahn, J., & Oh, A. (2021). Mitigating Language-Dependent Ethnic Bias in BERT. In Proceedings of EMNLP 2021. https://doi.org/10.18653/v1/2021.emnlp-main.42 ↩︎

Welbl, J., Glaese, A., Uesato, J., Dathathri, S., Mellor, J., Hendricks, L. A., … Huang, P.-S. (2021). Challenges in Detoxifying Language Models. In Findings of EMNLP 2021 (pp. 2447–2469). http://arxiv.org/abs/2109.07445 ↩︎ ↩︎

Borgeaud, S., Mensch, A., Hoffmann, J., Cai, T., Rutherford, E., Millican, K., … Sifre, L. (2021). Improving language models by retrieving from trillions of tokens. http://arxiv.org/abs/2112.04426 ↩︎

Komeili, M., Shuster, K., & Weston, J. (2021). Internet-Augmented Dialogue Generation. ↩︎

Nakano, R., Hilton, J., Balaji, S., Wu, J., Ouyang, L., Kim, C., … Schulman, J. (2021). WebGPT: Browser-assisted question-answering with human feedback. http://arxiv.org/abs/2112.09332 ↩︎

Sachan, D. S., Reddy, S., Hamilton, W., Dyer, C., & Yogatama, D. (2021). End-to-End Training of Multi-Document Reader and Retriever for Open-Domain Question Answering. In Proceedings of NeurIPS 2021. http://arxiv.org/abs/2106.05346 ↩︎

Yogatama, D., D’autume, C. de M., & Kong, L. (2021). Adaptive semiparametric language models. Transactions of the Association for Computational Linguistics, 9, 362–373. https://doi.org/10.1162/tacl_a_00371 ↩︎

Khandelwal, U., Levy, O., Jurafsky, D., Zettlemoyer, L., & Lewis, M. (2020). Generalization through Memorization: Nearest Neighbor Language Models. In Proceedings of ICLR 2020. http://arxiv.org/abs/1911.00172 ↩︎

Xue, L., Barua, A., Constant, N., Al-Rfou, R., Narang, S., Kale, M., … Raffel, C. (2021). ByT5: Towards a token-free future with pre-trained byte-to-byte models. ArXiv Preprint ArXiv:2105.13626. http://arxiv.org/abs/2105.13626 ↩︎

Clark, J. H., Garrette, D., Turc, I., & Wieting, J. (2021). Canine: Pre-training an Efficient Tokenization-Free Encoder for Language Representation. https://arxiv.org/abs/2103.06874 ↩︎

Tay, Y., Tran, V. Q., Ruder, S., Gupta, J., Chung, H. W., Bahri, D., … Metzler, D. (2021). Charformer: Fast Character Transformers via Gradient-based Subword Tokenization. http://arxiv.org/abs/2106.12672 ↩︎

Lazaridou, A., Kuncoro, A., & Gribovskaya, E. (2021). Mind the Gap: Assessing Temporal Generalization in Neural Language Models. In Proceedings of NeurIPS 2021. ↩︎

Röttger, P., & Pierrehumbert, J. B. (2021). Temporal Adaptation of BERT and Performance on Downstream Document Classification: Insights from Social Media. In Findings of EMNLP 2021 (pp. 2400–2412). https://doi.org/10.18653/v1/2021.findings-emnlp.206 ↩︎

Dhingra, B., Cole, J. R., Eisenschlos, J. M., Gillick, D., Eisenstein, J., & Cohen, W. W. (2021). Time-Aware Language Models as Temporal Knowledge Bases. http://arxiv.org/abs/2106.15110 ↩︎

Zhang, M. J. Q., & Choi, E. (2021). SituatedQA: Incorporating Extra-Linguistic Contexts into QA. In Proceedings of EMNLP 2021. https://doi.org/10.18653/v1/2021.emnlp-main.586 ↩︎

Birhane, A., Prabhu, V. U., & Kahembwe, E. (2021). Multimodal datasets: misogyny, pornography, and malignant stereotypes. http://arxiv.org/abs/2110.01963 ↩︎

Dodge, J., Sap, M., Marasović, A., Agnew, W., Ilharco, G., Groeneveld, D., & Gardner, M. (2021). Documenting the English Colossal Clean Crawled Corpus. In Proceedings of EMNLP 2021. ↩︎

Kreutzer, J., Caswell, I., Wang, L., Wahab, A., Esch, D. van, Ulzii-Orshikh, N., … Adeyemi, M. (2021). Quality at a Glance: An Audit of Web-Crawled Multilingual Datasets. In Transactions of the ACL 2021. ↩︎

Liu, F., Bugliarello, E., Ponti, E. M., Reddy, S., Collier, N., & Elliott, D. (2021). Visually Grounded Reasoning across Languages and Cultures. In Proceedings of EMNLP 2021. https://arxiv.org/abs/2109.13238 ↩︎

Dumoulin, V., Houlsby, N., Evci, U., Zhai, X., Goroshin, R., Gelly, S., & Larochelle, H. (2021). Comparing Transfer and Meta Learning Approaches on a Unified Few-Shot Classification Benchmark. In 35th Conference on Neural Information Processing Systems (NeurIPS 2021) Track on Datasets and Benchmarks. http://arxiv.org/abs/2104.02638 ↩︎

Bronskill, J., Massiceti, D., Patacchiola, M., Hofmann, K., Nowozin, S., & Turner, R. E. (2021). Memory Efficient Meta-Learning with Large Images. In Proceedings of NeurIPS 2021. http://arxiv.org/abs/2107.01105 ↩︎

Perez, E., Strub, F., De Vries, H., Dumoulin, V., & Courville, A. (2018). FiLM: Visual reasoning with a general conditioning layer. In 32nd AAAI Conference on Artificial Intelligence, AAAI 2018 (pp. 3942–3951). ↩︎

Triantafillou, E., Larochelle, H., Zemel, R., & Dumoulin, V. (2021). Learning a Universal Template for Few-shot Dataset Generalization. In Proceedings of ICML 2021. http://arxiv.org/abs/2105.07029 ↩︎